My home infrastructure is lacking a powerful enough core server to run what I need. This is my journey to Elara.

Present Situation

Right now, my home server is an old custom-built server from back when I owned an MSP. Its name is Worf and he’s served me well for the last few years, but his age and lackluster hardware have been showing recently:

- OS: Container Linux (formerly known as CoreOS) – a now defunct lightweight OS from Red Hat that provides minimal functionality for containerized infrastructure.

- Processors: 2 x AMD Athlon 64 X2 Dual Core 5600+ (2.8GHz/core)

- Memory: 8GB DDR2 RAM

- Storage: 2 x 4TB Seagate Barracuda 7200RPM hard drives (these are fairly new and will be carried over)

When Worf was built, probably 10+ years ago, these specs were perfect for my client’s file storage needs. But, as with most things in technology, it didn’t age well and Worf is now a senior citizen needing full decommissioning.

The Mission

At this stage, Worf needs to be replaced and his replacement needs to be robust enough to handle several more containers, but not too powerful since additional servers will be built to expand my infrastructure.

The eventual use for this server will be as the media and file server, holding lots and lots of data that needs high-speed access.

In my typical fashion with my major home hardware (except with Worf), this server will be named after one of Jupiter’s moons, Elara. In Greek mythology, Elara is the mother of the giant Tityos, and that seemed fitting since this server will eventually be broken off into children carrying the more mission-critical roles.

Planning

As with any good plan, you start with a list of requirements that need to be met and build from there. These are my baseline requirements for Elara:

- Powerful enough to run 15+ services on a normal load, but 30+ initially

- Rack mountable (preferable, but not an absolute requirement)

- Not deafeningly loud

- Fairly power efficient

- Budget of $900 before taxes and shipping

From those requirements, I’ve come up with the following specs:

- Intel-based* CPU with at least 20GHz of combined processing power

- Minimum of 32GB of RAM, but 64GB is preferable

- DDR4 RAM for the access speed

- Dual gigabit networking

- Room for at least 8 hard drives

- At least 1 M.2 SSD

- Using unRAID as the OS

* Typically, I’m an AMD guy. However, servers are a different animal and I do prefer Intel because of their enterprise track record, better heat dissipation, and better performance under constant loads.
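As a rough sanity check, "combined processing power" can be read as total cores multiplied by base clock. The sketch below assumes that reading; it is a heuristic, not a benchmark. The function name and the 8-core figure for the candidate Xeon are assumptions for illustration (the core count comes from Intel's published specs for parts like the E5-2640 v3, not from this post).

```python
def combined_ghz(sockets: int, cores_per_socket: int, base_clock_ghz: float) -> float:
    """Rough 'combined GHz' figure: total cores multiplied by base clock."""
    return round(sockets * cores_per_socket * base_clock_ghz, 2)


# Worf: 2 x dual-core Athlon 64 X2 at 2.8GHz per core (from the specs above).
worf = combined_ghz(sockets=2, cores_per_socket=2, base_clock_ghz=2.8)

# Assumption: a single 8-core Xeon with a 2.6GHz base clock.
xeon = combined_ghz(sockets=1, cores_per_socket=8, base_clock_ghz=2.6)

TARGET_GHZ = 20.0  # the "at least 20GHz" requirement from the list above

print(f"Worf: {worf} GHz, candidate Xeon: {xeon} GHz")
print("Candidate meets target:", xeon >= TARGET_GHZ)
```

By this measure a single mid-range Xeon E5 clears the 20GHz line on its own, while Worf sits at a little over half of it.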

The final specs iteration:

- 1 x Intel Xeon E5 (between 2630 and 2667)

- 2 x 32GB DDR4-2133 RAM

- Dual gigabit networking

- At least 8 SATA connections

- 8TB of 7200RPM storage

- 1 x largest capacity drive for parity

- 1 x 1TB SSD for caching (preferable, but not absolute)
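Because one drive is set aside for parity, the usable space is smaller than the raw total. Here is a minimal sketch of that arithmetic, assuming a simplified unRAID-style model in which the largest drives are reserved for parity and usable capacity is the sum of the rest. The function name and the drive mixes are hypothetical examples.

```python
def usable_tb(drive_sizes_tb: list[float], parity_drives: int = 1) -> float:
    """Usable capacity when the largest drives are reserved for parity."""
    if parity_drives >= len(drive_sizes_tb):
        raise ValueError("need at least one data drive besides parity")
    # Parity must be at least as large as any data drive, so take the largest.
    ordered = sorted(drive_sizes_tb, reverse=True)
    data_drives = ordered[parity_drives:]
    return sum(data_drives)


REQUIRED_TB = 8  # the storage target from the list above

three_by_four = usable_tb([4, 4, 4])  # two data drives + one parity
eight_by_one = usable_tb([1] * 8)     # seven data drives + one parity

print(f"3 x 4TB -> {three_by_four}TB usable, enough: {three_by_four >= REQUIRED_TB}")
print(f"8 x 1TB -> {eight_by_one}TB usable, enough: {eight_by_one >= REQUIRED_TB}")
```

Under this model, eight 1TB drives land just short of the 8TB target once parity is taken out, which is worth keeping in mind when comparing drive bundles.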

Options

Okay, specs are done. Time to start engineering. I’ve come up with a few different options for building Elara.

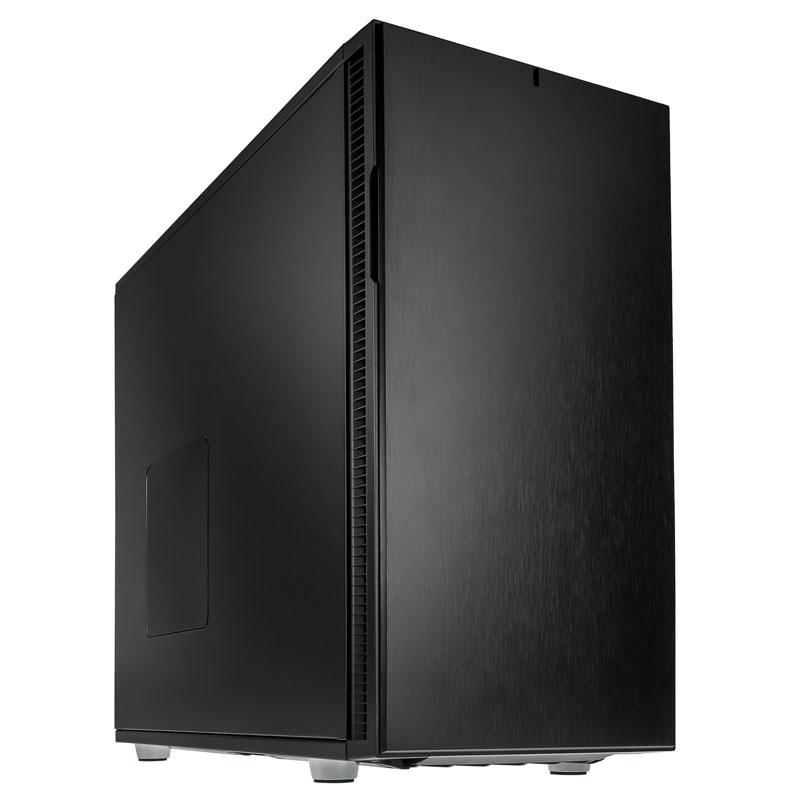

Custom Tower

As a “computer guy” (as the non-computer folks call me), of course I had to look into building Elara myself. While it wouldn’t be rack mountable, it would be pretty, and aesthetics are key.

I popped over to PCPartPicker and built Elara.

She’s not flashy, but the case is a solid black aluminum body with lots of potential with vinyl and a little modification for LEDs.

This option comes to $1,489.40 before tax and shipping. With my two current Barracuda drives carried over, though, the price drops to $1,349.44.

That’s a big oof at that price. My budget for this build is $900, so even with the drives carried over we’re over by about $450.

Although having a server that’s rack mountable wasn’t an absolute requirement, it is still an important preference to consider since there will eventually be multiple servers.

Dell PowerEdge R630

With my enterprise IT credentials, I’ve always loved Dell’s servers. (Not their consumer side, but they’re warming up to me again.) My next stop was the Dell PowerEdge R630.

This 13th generation of PowerEdge came out around 2014 and has since been discontinued by Dell, so it’s no longer available for purchase on their site, but it’s still a staple generation for general virtualization, especially for home users.

I was led to Orange Computers by r/homelab. Their website is a bit dated, but their customization is on par with the Dell website.

The R630 is a 1U rack-mounted server and I configured it with the following:

- 1 x Intel Xeon E5-2640 v3 2.6GHz processor

- 2 x 32GB (64GB) DDR4-2133 RAM

- HBA330 non-RAID pass through*

- 8 x empty 2.5″ drive bays

- iDRAC 8 Express

- 4-port gigabit ethernet network adapter

- Single 750W PSU

- Sliding rail kit with cable management arm

- Metal faceplate

* To use any type of software NAS like unRAID, you need either to flash the PERC with IT firmware or to buy the HBA330 pass through. I’m risk-averse, so I chose the foolproof option since I’m able to configure this myself.

The biggest con for the R630 is that the smaller form factor (being a 1U server) means it’s louder and can only use 2.5″ hard drives. I wouldn’t be able to carry over my 3.5″ drives, and 2.5″ drives have a lower data read speed (due to fewer sectors on the platter) and are typically more expensive.

This option comes to $851 before taxes and shipping. Tack on an additional $1,890 for seven 2TB 2.5″ hard drives, or $500 for eight 1TB 2.5″ drives (which nearly halves the storage). Either way, this option is way over budget, too.

Dell PowerEdge R730

I moved on to the PowerEdge R730. It’s in the same generation as the R630, but is the next model up.

The R730 is a 2U rack-mounted server and I configured it the same as the R630 above except for the following:

- Large Form Factor (LFF) for 3.5″ hard drives

- 8 x empty 3.5″ drive bays

This option comes to $1,042 before taxes and shipping. This is about $140 over budget, but what’s IT without at least a little cost overrun? 😅 I would also need to purchase 2 additional 4TB drives, adding a further $220 to this cost overrun.

The Small Form Factor (SFF) version of this model is under budget at $822, but there’s the additional $1,980 or $500 (with less storage) for the drives.

I was also able to find the R730 LFF on MET Servers (also an r/homelab link) for $955, but their only PERC option was the H730. You can get that PERC into HBA mode, so it shouldn’t be an issue.
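To put the options side by side, the quoted prices can be tallied against the $900 budget. This is only a sketch using the figures from this post. The labels and the `tally` helper are made up for illustration, and it assumes the MET unit would need the same two extra 4TB drives ($220) as the Orange one.

```python
BUDGET = 900.00

# (label, base price, extra drive cost), all before taxes and shipping.
options = [
    ("Custom tower, reusing drives", 1349.44, 0.00),
    ("R630 + 8 x 1TB 2.5in", 851.00, 500.00),
    ("R630 + 7 x 2TB 2.5in", 851.00, 1890.00),
    ("R730 LFF (Orange) + 2 x 4TB", 1042.00, 220.00),
    ("R730 SFF + 8 x 1TB 2.5in", 822.00, 500.00),
    # Assumption: the MET unit needs the same two extra 4TB drives.
    ("R730 LFF (MET) + 2 x 4TB", 955.00, 220.00),
]


def tally(options, budget):
    """Return (label, total, amount over budget) rows, cheapest first."""
    rows = []
    for label, base, drives in options:
        total = round(base + drives, 2)
        rows.append((label, total, round(total - budget, 2)))
    return sorted(rows, key=lambda row: row[1])


rows = tally(options, BUDGET)
for label, total, over in rows:
    print(f"{label:<32} ${total:>8,.2f}  over by ${over:,.2f}")
```

With drives included, every option lands over budget; the R730 LFF builds simply overshoot by the least.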

Right now, the LFF is out of stock at both Orange and MET. I’m waiting on both to let me know when (or, really, if) it will be back in stock.